Basic Terms in Artificial Intelligence, Machine Learning and Data Science.

Artificial Intelligence (AI)

Let us begin with the term intelligence. An intelligent being is one that can think for itself, as opposed to one that just follows orders (the latter would be a robot).

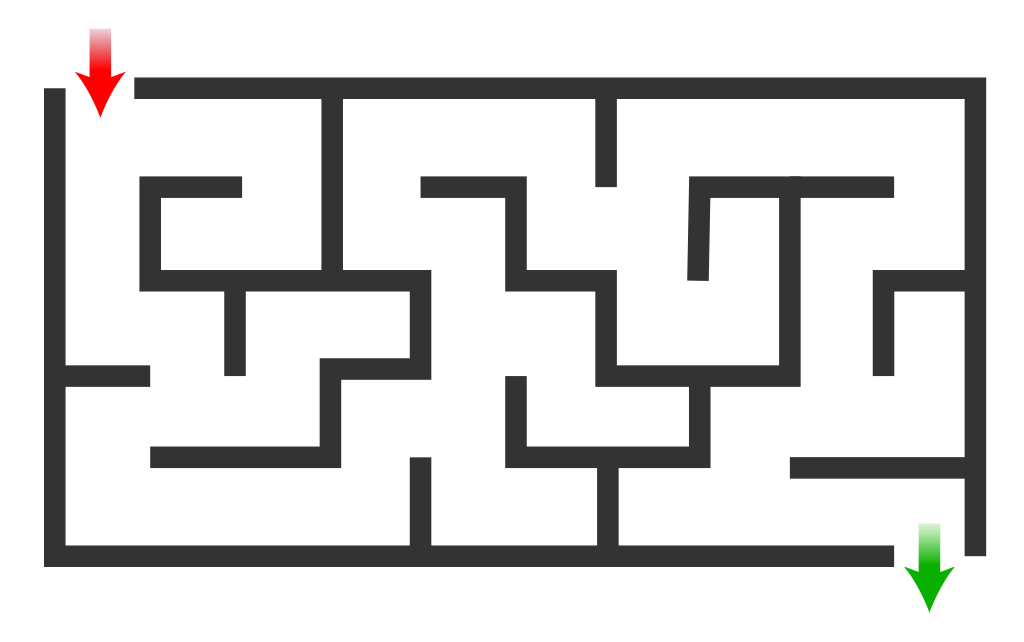

One of the best ways to explain this is the difference between getting through a maze and crossing an actual road.

A Maze Problem

Crossing a busy road

Getting through the maze can be programmed using a few if-then-else statements alongside a few loops. Crossing the road requires intelligence: you cannot describe it in if-else terms such as "if the road is 30 ft wide and the nearest car is travelling at 50 mph", and so forth. Humans, animals, birds, etc. have this intelligence. When machines such as computers and mobile phones develop it, we call it Artificial Intelligence (AI).

Intelligence, in this sense, means receiving and digesting information and detecting patterns in it. When machines do it, it is AI; when humans, birds or animals do it, it is simply intelligence. Its uses in computer science include optical character recognition, face recognition, translation, etc.
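To make the maze half of the comparison concrete, here is a minimal sketch in Python. The grid layout, the symbols (`S`, `E`, `#`, `.`) and the function name `solve_maze` are assumptions made up for this illustration. It uses a breadth-first search, which is one common way to solve a maze with nothing more than loops and if conditions.

```python
from collections import deque

# Hypothetical maze: '#' is a wall, '.' is open, 'S' is the start, 'E' is the exit.
MAZE = [
    "S.#.",
    ".##.",
    "...E",
]

def solve_maze(maze):
    """Return the number of steps on a shortest path from S to E, or None."""
    rows, cols = len(maze), len(maze[0])
    start = next((r, c) for r in range(rows) for c in range(cols)
                 if maze[r][c] == "S")
    queue = deque([(start, 0)])
    seen = {start}
    while queue:
        (r, c), steps = queue.popleft()
        if maze[r][c] == "E":
            return steps
        # Try the four neighbouring cells: down, up, right, left.
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and maze[nr][nc] != "#" and (nr, nc) not in seen:
                seen.add((nr, nc))
                queue.append(((nr, nc), steps + 1))
    return None  # no route to the exit

print(solve_maze(MAZE))
```

Every rule here is written by hand in advance. Nothing comparable can be written down for the road, where the situation changes from moment to moment.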

Machine Learning

A machine learning (ML) system is one that can learn from data, basically past inputs and outputs. ML systems can make predictions and/or decisions without being explicitly programmed for each case. They can keep learning and change their behavior as the inputs and outputs change. Some applications are listed above.

Supervised Learning

A software application is trained on a given data set in which the inputs come with labelled outputs. Based on this, the software can make decisions on new data.

Example:
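A minimal sketch of the idea in Python, assuming made-up data: each training example pairs some inputs (hours studied, hours slept) with a labelled output (pass or fail). The data and the name `predict` are hypothetical. The technique shown is a one-nearest-neighbour classifier, chosen only because it is short; it is one of many supervised methods.

```python
# Hypothetical labelled training data: (hours studied, hours slept) -> result.
training_data = [
    ((1.0, 4.0), "fail"),
    ((2.0, 5.0), "fail"),
    ((6.0, 7.0), "pass"),
    ((8.0, 6.0), "pass"),
]

def predict(features):
    """Return the label of the closest training example (1-nearest neighbour)."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    _, label = min(training_data,
                   key=lambda pair: squared_distance(pair[0], features))
    return label

# New, unseen inputs: the program decides based on the labelled examples.
print(predict((7.0, 7.0)))
print(predict((1.5, 5.0)))
```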

Unsupervised Learning

The program is given an unlabelled data set and automatically discovers patterns and groupings in it. Finding such groupings is called clustering. For example, given a data set of products, the program can automatically group them into categories. The same can be done with emails, books, etc.

Classification

Classification is assigning items to categories.

There are three types of classification.

Binary. Things are classified into two groups, such as yes and no. The simplest examples would be pass or fail, win or lose, watch the movie or not.

Multi-class. Multi-class differs from Binary in the sense that there are many possible groups. However, the outcome will still belong to one class only.

Some examples would be exam result divisions such as fail, third division, second division and first division; a movie classified as comedy, suspense, tragedy, etc.; or a blog categorized as being about films, travel, literature, etc.

Multi-label classification. Same as multi-class, with the difference that an item can have more than one label. The labels are non-exclusive, whereas in multi-class they are exclusive. For instance, an email can be classified as both non-spam and financial. A site can be both a travel blog and a food blog. Movies can belong to more than one genre.
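The difference between the three types shows up in the shape of the answer. The sketch below assumes a model has already produced a score per genre for one movie. The scores, the 0.5 threshold and the function names are all hypothetical and chosen for illustration.

```python
# Hypothetical scores a trained model might assign to one movie, per genre.
scores = {"comedy": 0.7, "suspense": 0.6, "tragedy": 0.1}

def binary(score, threshold=0.5):
    """Binary: a single yes/no answer."""
    return "yes" if score >= threshold else "no"

def multi_class(scores):
    """Multi-class: exactly one label, the highest-scoring one."""
    return max(scores, key=scores.get)

def multi_label(scores, threshold=0.5):
    """Multi-label: every label whose score clears the threshold."""
    return sorted(label for label, s in scores.items() if s >= threshold)

print(binary(scores["comedy"]))   # is it a comedy, yes or no?
print(multi_class(scores))        # the single best genre
print(multi_label(scores))        # all genres that apply
```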

Clustering

When you're trying to learn about something, say music, one approach might be to look for meaningful groups or collections. You might organize music by genre, while your friend might organize music by decade. How you choose to group items helps you to understand more about them as individual pieces of music. You might find that you have a deep affinity for punk rock and further break down the genre into different approaches or music from different locations. On the other hand, your friend might look at music from the 1980s and be able to understand how the music across genres at that time was influenced by the sociopolitical climate. In both cases, you and your friend have learned something interesting about music, even though you took different approaches.

In machine learning too, we often group examples as a first step to understand a subject (data set) in a machine learning system. Grouping unlabeled examples is called clustering.

As the examples are unlabeled, clustering relies on unsupervised machine learning. If the examples are labeled, then clustering becomes classification.
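As a rough illustration of grouping unlabeled examples, the sketch below runs k-means, one widely used clustering algorithm, on a list of numbers. The data (song release years), the starting centers and the function name `k_means_1d` are assumptions invented for this example.

```python
def k_means_1d(values, centers, iterations=10):
    """Group numbers around the given starting centers (plain k-means)."""
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        # Assignment step: each value joins its nearest center.
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # Update step: each center moves to the mean of its cluster.
        centers = [sum(c) / len(c) if c else centers[i]
                   for i, c in enumerate(clusters)]
    return centers, clusters

# Hypothetical unlabeled data: release years of songs in a playlist.
years = [1981, 1983, 1985, 2009, 2011, 2013]
centers, clusters = k_means_1d(years, centers=[1980.0, 2000.0])
print(centers)
print(clusters)
```

No year was labeled with a decade beforehand; the two groups emerge from the distances between the values alone.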

Decision Trees

A decision tree is a multilevel decision system: each answer leads to a further, narrower question. For example, a site is either a travel blog or a literary blog. If it is a literary blog, it is about either novels or drama. If novels, then either suspense or romance.
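The blog example can be written out as nested decisions. This is only a hand-written sketch: the function name `classify_site` and its boolean parameters are hypothetical, and in machine learning the questions and their order are normally learned from data rather than typed in by a programmer.

```python
def classify_site(is_travel, is_novel=False, is_suspense=False):
    """Walk the tree from the text: each question narrows the category."""
    if is_travel:
        return "travel blog"
    # Not travel, so in this two-way example it is a literary blog.
    if not is_novel:
        return "literary blog: drama"
    if is_suspense:
        return "literary blog: novels: suspense"
    return "literary blog: novels: romance"

print(classify_site(is_travel=True))
print(classify_site(is_travel=False, is_novel=True, is_suspense=True))
```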

Regression

Regression is supervised learning where we seek to predict numeric values rather than answer yes-or-no questions or pick a category. Examples include predicting temperatures, share prices, sales, etc.

You can view an example here.
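For a self-contained illustration, the sketch below fits a straight line to a handful of points using ordinary least squares, the simplest form of regression, and then predicts a value for a new input. The spend and sales figures and the name `fit_line` are made up for this example.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical past data: advertising spend -> sales.
spend = [1.0, 2.0, 3.0, 4.0]
sales = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(spend, sales)
predicted = slope * 5.0 + intercept  # predict sales for an unseen spend of 5.0
print(slope, intercept, predicted)
```

The output is a number on a continuous scale, which is what separates regression from classification.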

Artificial Neural Networks

An ANN is based on a collection of connected units or nodes called artificial neurons, which loosely model the neurons in a biological brain. Each connection, like the synapses in a biological brain, can transmit a signal to other neurons. An artificial neuron receives signals then processes them and can signal neurons connected to it. The "signal" at a connection is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs. The connections are called edges. Neurons and edges typically have a weight that adjusts as learning proceeds. The weight increases or decreases the strength of the signal at a connection. Neurons may have a threshold such that a signal is sent only if the aggregate signal crosses that threshold.

Typically, neurons are aggregated into layers. Different layers may perform different transformations on their inputs. Signals travel from the first layer (the input layer), to the last layer (the output layer), possibly after traversing the layers multiple times.
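The description above can be sketched in a few lines of Python. The assumptions here are the sigmoid as the non-linear function, a bias term added to each sum, and hand-picked weights. None of these come from the quoted text, and in practice training would adjust the weights.

```python
import math

def sigmoid(x):
    """A common non-linear function that squashes any number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def neuron(inputs, weights, bias):
    """Weighted sum of the incoming signals, passed through a non-linearity."""
    total = sum(i * w for i, w in zip(inputs, weights)) + bias
    return sigmoid(total)

def layer(inputs, weight_rows, biases):
    """A layer is a list of neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

# Hypothetical, hand-picked weights; learning would change these values.
signal = [1.0, 0.0]                                            # input layer
hidden = layer(signal, [[0.5, -0.5], [-1.0, 1.0]], [0.0, 0.0])  # hidden layer
output = layer(hidden, [[1.0, -1.0]], [0.0])                    # output layer
print(hidden, output)
```

Signals flow from the input layer through the hidden layer to the output layer, and each weight strengthens or weakens the signal on its connection.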

https://en.wikipedia.org/wiki/Artificial_neural_network

Deep Learning

Deep learning is best defined on the IBM site:

Deep learning is a subset of machine learning, which is essentially a neural network with three or more layers. These neural networks attempt to simulate the behavior of the human brain—albeit far from matching its ability—allowing it to “learn” from large amounts of data. While a neural network with a single layer can still make approximate predictions, additional hidden layers can help to optimize and refine for accuracy.

Deep learning drives many artificial intelligence (AI) applications and services that improve automation, performing analytical and physical tasks without human intervention. Deep learning technology lies behind everyday products and services (such as digital assistants, voice-enabled TV remotes, and credit card fraud detection) as well as emerging technologies (such as self-driving cars).

https://www.ibm.com/cloud/learn/deep-learning

Natural Language Processing

Natural language processing (NLP) refers to the branch of computer science—and more specifically, the branch of artificial intelligence or AI—concerned with giving computers the ability to understand text and spoken words in much the same way human beings can.

NLP combines computational linguistics—rule-based modeling of human language—with statistical, machine learning, and deep learning models. Together, these technologies enable computers to process human language in the form of text or voice data and to ‘understand’ its full meaning, complete with the speaker or writer’s intent and sentiment.

NLP drives computer programs that translate text from one language to another, respond to spoken commands, and summarize large volumes of text rapidly—even in real time. There’s a good chance you’ve interacted with NLP in the form of voice-operated GPS systems, digital assistants, speech-to-text dictation software, customer service chatbots, and other consumer conveniences. But NLP also plays a growing role in enterprise solutions that help streamline business operations, increase employee productivity, and simplify mission-critical business processes.

Computer Vision

Computer vision is a field of artificial intelligence (AI) that enables computers and systems to derive meaningful information from digital images, videos and other visual inputs — and take actions or make recommendations based on that information. If AI enables computers to think, computer vision enables them to see, observe and understand.

Computer vision works much the same as human vision, except humans have a head start. Human sight has the advantage of lifetimes of context to train how to tell objects apart, how far away they are, whether they are moving and whether there is something wrong in an image.

Computer vision trains machines to perform these functions, but it has to do it in much less time with cameras, data and algorithms rather than retinas, optic nerves and a visual cortex. Because a system trained to inspect products or watch a production asset can analyze thousands of products or processes a minute, noticing imperceptible defects or issues, it can quickly surpass human capabilities.

Computer vision is used in industries ranging from energy and utilities to manufacturing and automotive – and the market is continuing to grow. It is expected to reach USD 48.6 billion by 2022.

Cognitive science in artificial intelligence

Cognitive science in artificial intelligence (AI) refers to the application of the principles and theories of cognitive science to the development of AI systems. This can include the use of cognitive models, which are computational representations of cognitive processes, to simulate human-like intelligence in machines. For example, cognitive architectures, such as ACT-R or SOAR, which are used to build intelligent agents, are based on cognitive science theories. In addition, cognitive scientists and AI researchers often collaborate to develop AI systems that can perform tasks that are typically associated with human intelligence such as natural language processing, problem solving, decision making and perception.